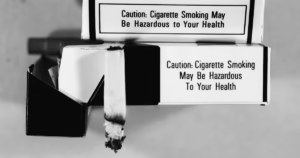

What Big Tobacco teaches us about delay, doubt, and the accelerating risk curve of artificial intelligence

From the Craig Bushon Show Media Team

For decades, one of the most profitable industries in America operated behind a simple strategy. It was not to prove its product was safe. It was to make sure no one could prove the product was dangerous fast enough to stop the industry.

The tobacco industry understood something fundamental about human behavior, public policy, and markets. If you can keep the science unsettled, you can keep the revenue flowing.

That strategy worked for years. Even as evidence mounted linking smoking to lung cancer and other diseases, the public messaging stayed consistent. More studies were needed. The science was not conclusive. Correlation did not equal causation.

And while that conversation continued, consumption scaled.

By the time the warnings became unavoidable and regulation caught up, the damage was already widespread and deeply embedded in society.

That is the pattern. Not the product, but the pattern.

Now shift that lens to artificial intelligence.

AI is being introduced into nearly every layer of modern life at a speed that is difficult to fully process. It is in hiring systems, financial markets, education, healthcare recommendations, national defense conversations, and the content people consume every day.

At the same time, you hear a familiar refrain.

We need more research.

We are still understanding the risks.

The technology is complex.

All of that is true.

But it also creates the same structural environment, one where delay benefits whoever is moving the fastest.

This is not about accusing anyone of bad intent. It is about recognizing incentives.

In the current system, the companies that scale AI the fastest gain the most advantage. Market share, infrastructure control, data accumulation, and long-term dominance all reward speed. Caution, on the other hand, is not directly rewarded in the same way.

So even responsible voices calling for thoughtful development exist alongside massive investment and rapid deployment. Those two realities are not in conflict. They are happening at the same time.

That is exactly what makes this moment worth paying attention to.

There is another layer to this that matters even more.

With tobacco, risk was not immediate for everyone. Some people smoked for years and appeared fine. That reality became part of the defense. It softened the perception of danger and gave people a reason to dismiss broader warnings.

But public health is not measured by exceptions. It is measured by outcomes across populations.

The same principle applies here.

AI does not present risk in a single moment. It scales with exposure.

At low levels, it helps with productivity. Writing, research, scheduling, and routine tasks become easier.

At the next level, AI begins to influence decisions. Hiring filters, legal guidance, medical suggestions, and the shaping of information flows start to shift how people think and act.

At higher levels, dependence becomes structural. Critical systems, economic decisions, infrastructure, and even national security functions begin to rely on AI-driven processes.

That is where the risk curve steepens.

Not because of a single failure, but because of cumulative reliance.

The more a system depends on something it does not fully control or understand, the more fragile that system becomes under stress.

This is where the comparison becomes more than an analogy.

It becomes a model.

Limited use enhances capability.

Expanded use reshapes behavior.

Full dependence changes the structure of decision-making itself.

And once that level of integration is reached, stepping back is no longer simple.

There is also a scale difference that cannot be ignored.

Cigarettes affected individual health.

AI has the potential to affect entire systems at once.

Economies can shift. Labor markets can compress. Information environments can be influenced in ways that are difficult to detect in real time. Decision-making authority can gradually move away from individuals without a clear moment where anyone notices the transition.

That does not mean collapse is inevitable. It means the stakes are higher and the margin for error is smaller.

One of the most overlooked dynamics in all of this is timing.

Regulation historically follows impact, not anticipation. By the time rules are put in place, the system they are trying to regulate is already established.

With tobacco, that meant decades of delay.

With AI, the deployment cycle is moving much faster, which leaves even less time for rules to catch up.

Infrastructure is being built now. Data centers, energy agreements, chip supply chains, and integration into business operations are all accelerating at a pace that outstrips traditional policy response.

By the time a consensus forms around what the risks fully are, the dependency may already be locked in.

That is how systems behave when growth outpaces governance.

None of this leads to a simple conclusion like "stop AI" or "AI is bad."

The real question is whether we recognize the pattern early enough to respond differently than we did before.

Because the lesson from tobacco was never just about cigarettes.

It was about what happens when uncertainty becomes useful, when delay becomes profitable, and when scale outruns accountability.

We are not looking at the same product.

But we may be looking at the same playbook.

And if that is true, the most important decisions are not the ones made after everything is proven beyond doubt.

They are the ones made while the evidence is still forming and the system is still being built.

That is the moment we are in now.

This isn’t about fear. It’s about awareness.

We’ve seen what happens when industries move faster than accountability. We’ve seen what happens when doubt is used to delay action. And we’ve seen the cost of waiting until the damage is undeniable.

AI is not cigarettes. But the behavior around it should look familiar.

The difference this time is scale. Speed. And how deeply it can embed itself into everyday life before most people realize what has changed.

So the real question isn’t whether AI will shape the future. It already is.

The question is whether we stay ahead of it or wake up one day trying to catch up to something that has already reshaped the rules.

Because we don’t just follow the headlines… we read between the lines to get to the bottom line of what’s really going on.

Disclaimer

This piece is for informational purposes only. It does not claim that artificial intelligence causes the same harm as tobacco or that any company or industry is acting with bad intent or engaging in misconduct.

The comparison is used to highlight patterns in how risk, uncertainty, and market incentives can develop over time. AI is a different technology with both benefits and risks, and future outcomes will depend on how it is developed, governed, and used.

Readers are encouraged to review multiple perspectives and draw their own conclusions.